Software development teams face growing pressure to deliver features quickly while maintaining stability and quality in commercial environments. CI/CD pipelines automatically move code changes into live systems, replacing manual processes that cause delays and errors. These automated systems help organizations deliver software updates frequently and quickly. Because uniform, repeatable deployment procedures minimize human error and improve operational efficiency, understanding pipeline fundamentals helps organizations streamline development, speed up delivery, and improve product reliability.

1. Automating Code Integration and Validation

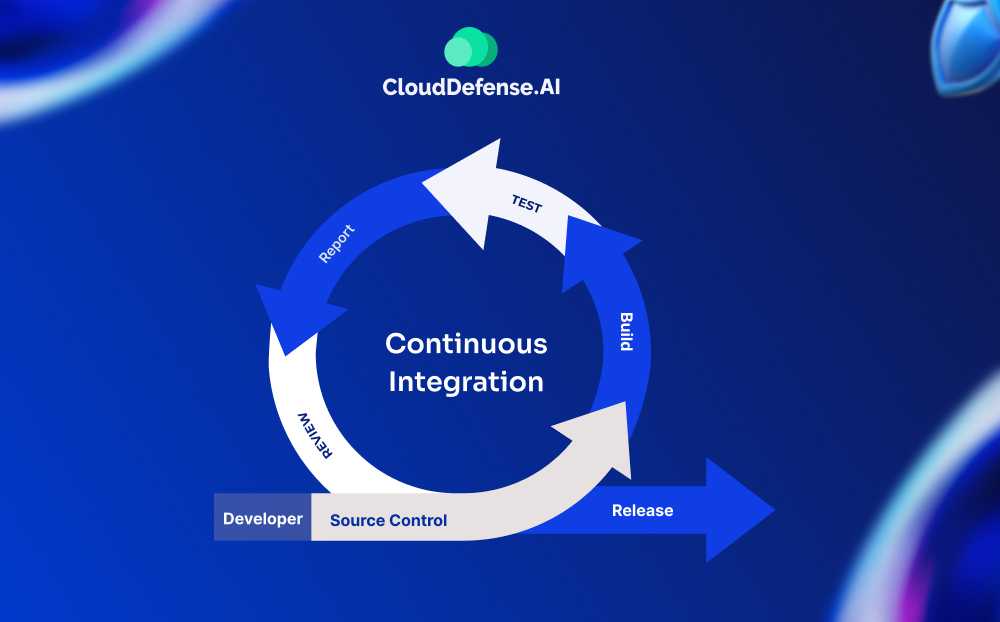

Integration pipelines automatically merge code contributions from several engineers into shared repositories, avoiding the integration problems that arise when teams work in isolation. Automated builds compile source code immediately after each contribution, catching syntax errors and dependency issues before they spread across teams. During builds, unit tests run automatically to verify that new code works as intended and has not broken existing functionality. Static code analysis tools check contributions for security flaws, poor code quality, and standards violations without requiring manual review. This continuous validation gives quick feedback, so developers can fix issues while the context is still fresh instead of finding errors weeks later during integration phases.
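The build, test, and analysis sequence described above can be sketched as a small fail-fast stage runner. This is a minimal illustration, not a real CI system: `run_pipeline`, `StageResult`, and `compile_check` are hypothetical names, and the build step is stood in for by Python's built-in `compile`, which only detects syntax errors.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class StageResult:
    name: str
    passed: bool
    detail: str = ""


# A stage is a hypothetical (name, check) pair; check returns (passed, detail).
Stage = Tuple[str, Callable[[], Tuple[bool, str]]]


def run_pipeline(stages: List[Stage]) -> List[StageResult]:
    """Run stages in order and stop at the first failure (fail fast)."""
    results: List[StageResult] = []
    for name, check in stages:
        passed, detail = check()
        results.append(StageResult(name, passed, detail))
        if not passed:
            break  # later stages are skipped so feedback arrives quickly
    return results


def compile_check(source: str) -> Tuple[bool, str]:
    """Stand-in for a build step: report syntax errors in Python source."""
    try:
        compile(source, "<contribution>", "exec")
        return True, "build ok"
    except SyntaxError as exc:
        return False, f"syntax error on line {exc.lineno}"
```

In a real pipeline, each check would call the project's actual compiler, test runner, and static analyzer. The ordering reflects the idea in the text: cheap checks run first, so a broken build is reported before any time is spent on tests.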

2. Establishing Consistent Build and Testing Environments

Pipelines establish uniform conditions for building and testing software, eliminating the discrepancies that cause unexplained failures across different developer machines. Containerization technologies ensure that the same dependencies, configurations, and system settings are present wherever code runs, which rules out environmental factors as a cause of failure. Automated environment provisioning spins up fresh testing infrastructure for every pipeline execution, avoiding configuration drift and contamination from earlier test runs. Because conditions are reproducible, a successful pipeline run is a reliable predictor of production behavior rather than a source of uncertainty about deployment results. This consistency significantly cuts the debugging time spent on environment-specific problems that are not actual program defects.

3. Accelerating Feedback Loops for Developers

Rapid pipeline execution gives developers fast feedback on the quality of their code, allowing prompt corrections instead of extensive debugging sessions later. Contributors are notified of failed builds within minutes, so they can address problems while implementation details are still fresh in their minds. Automated test results point out exactly which functionality was broken, so no lengthy investigation is needed. Performance benchmarks show whether a change degraded system speed or pushed memory utilization or resource consumption beyond allowable limits. Quick feedback cycles keep development moving by preventing work-in-progress from piling up behind unnoticed issues that block entire teams.
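A benchmark gate of the kind described above might look like the following sketch. The metric names and limits in `LIMITS` are hypothetical placeholders, and `find_regressions` is an illustrative helper rather than part of any particular CI tool.

```python
from typing import Dict, List

# Hypothetical limits; real values depend on the project's own budgets.
LIMITS: Dict[str, float] = {
    "p95_latency_ms": 250.0,
    "peak_memory_mb": 512.0,
}


def find_regressions(measured: Dict[str, float], limits: Dict[str, float]) -> List[str]:
    """Return a message for every metric that exceeds its limit or is missing."""
    problems = []
    for metric, limit in sorted(limits.items()):
        value = measured.get(metric)
        if value is None:
            problems.append(f"{metric}: no measurement")
        elif value > limit:
            problems.append(f"{metric}: {value} exceeds limit {limit}")
    return problems
```

A pipeline step could fail the build whenever the returned list is non-empty and include the messages in the notification sent to the contributor.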

4. Enabling Frequent and Reliable Releases

Deployment automation lets businesses ship software updates several times a day instead of enduring long manual deployment procedures. Automated deployment scripts perform the same steps the same way every time, removing the human error that causes production incidents during manual releases. Rollback capabilities provide a safety net by automatically reversing faulty releases when health checks find problems after deployment. Blue-green deployment techniques reduce downtime by shifting traffic between two environments instead of updating production systems in place. Releases change from infrequent, risky events into routine processes that steadily deliver additional value to users.
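The interaction between blue-green switching and automatic rollback can be sketched as follows. This is a simplified in-memory model under stated assumptions: `BlueGreenRouter` is a hypothetical class, and `health_check` stands in for whatever probe a real deployment would run against the new environment.

```python
from typing import Callable, Dict


class BlueGreenRouter:
    """Tracks which of two environments currently receives live traffic."""

    def __init__(self, live: str = "blue") -> None:
        self.versions: Dict[str, str] = {"blue": "", "green": ""}
        self.live = live

    @property
    def idle(self) -> str:
        return "green" if self.live == "blue" else "blue"

    def release(self, version: str, health_check: Callable[[str], bool]) -> bool:
        """Deploy to the idle environment, switch traffic, roll back if unhealthy."""
        previous = self.live
        target = self.idle
        self.versions[target] = version  # live traffic is untouched so far
        self.live = target               # switch traffic to the new version
        if health_check(target):
            return True
        self.live = previous             # automatic rollback: restore old routing
        return False
```

Because the previous environment is never modified during a release, rolling back is only a routing change, which is what keeps downtime low in this model.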

5. Improving Collaboration and Visibility

Pipeline dashboards give the whole organization insight into development progress, build statuses, and deployment operations, so information no longer stays isolated within teams. Stakeholders can monitor release pipelines without interrupting developers and can easily see which features are approaching production. Automated messages notify the relevant parties of build failures, successful deployments, or issues that need correction, with no manual status reporting. Audit trails record precisely which code versions were deployed and when, supporting compliance requirements and incident investigations. Improved visibility lowers the coordination overhead that software releases typically require while promoting accountability.
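As a rough illustration of the audit trail idea, the sketch below records which version went to which environment and when. `AuditTrail` and `DeploymentRecord` are hypothetical names, and the log is kept in memory only; a real pipeline would persist it in durable, tamper-resistant storage.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)
class DeploymentRecord:
    commit: str
    environment: str
    deployed_at: str  # ISO 8601 timestamp


class AuditTrail:
    """Append-only, in-memory deployment log."""

    def __init__(self) -> None:
        self._records: List[DeploymentRecord] = []

    def record(self, commit: str, environment: str,
               when: Optional[datetime] = None) -> DeploymentRecord:
        # Default to the current UTC time when no timestamp is supplied.
        when = when or datetime.now(timezone.utc)
        entry = DeploymentRecord(commit, environment, when.isoformat())
        self._records.append(entry)
        return entry

    def history(self, environment: str) -> List[DeploymentRecord]:
        """Return deployments to one environment, oldest first."""
        return [r for r in self._records if r.environment == environment]

    def to_json(self) -> str:
        """Serialize the full log, for example for a compliance export."""
        return json.dumps([asdict(r) for r in self._records])
```

Records are frozen so an entry cannot be altered after it is written, which mirrors the requirement that an audit trail reflect what actually happened.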

Conclusion

Continuous integration and deployment pipelines fundamentally transform software delivery from manual, error-prone processes into automated, dependable operations. By lowering risk, removing manual dependencies, and using agentic AI to optimize complicated application lifecycles, Opkey helps businesses streamline CI/CD execution. Designed specifically for large-scale applications, Opkey offers quantifiable IT cost reductions, enhanced reliability, and quicker deployments without increasing operational costs. By combining deep domain intelligence with intelligent automation, it helps keep continuous delivery pipelines dependable, effective, and aligned with corporate objectives.